Designing for Genuine Inquiry: The Syzygy Cluster Design Model

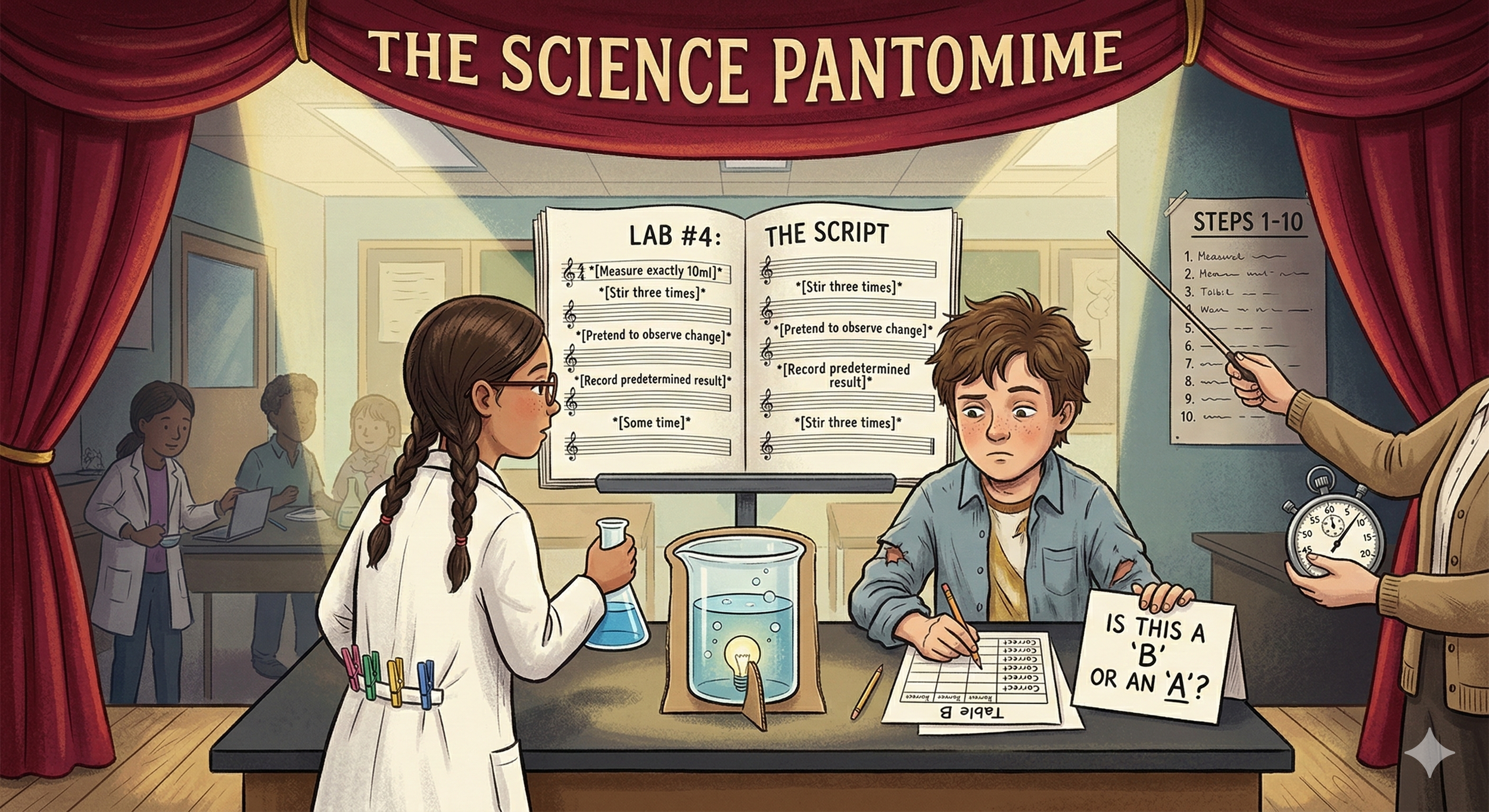

Science pantomime occurs when students go through the motions of inquiry without engaging in authentic investigation. They follow prescribed steps, complete tables, and arrive at expected answers. Yet when asked what they have learned, many offer little evidence of understanding, sometimes merely repeating answers without grasping the underlying concepts.

A framework doesn't arrive fully formed. This one started with a forensics class and a question I couldn't stop asking.

A year ago, I set out to build three laboratory inquiry books: one for Biology, one for Physics, and one for a course I was calling Nonviolent Forensic Science. The forensics idea came from frustration with existing materials. Most forensic science curricula lean hard into the sensational. I wanted to teach the science of forensics without glorifying what forensic science is sometimes called to investigate. In blood spatter, toxicology, and trace evidence, the science is rich and genuinely compelling on its own terms.

But as I started designing those lessons, I ran into a problem that had nothing to do with forensics. I had no consistent model for building an investigation. Every lesson made its own rules. Some were more open than others. Some aligned with NGSS; some didn't. If I were going to build something scalable enough to fit real classroom schedules and rigorous enough to produce genuine learning, I needed a framework first.

That search sent me somewhere I didn't entirely anticipate.

Starting With Clusters

I was familiar with how New York State uses the term "cluster" in its science assessments. In the New York State P-12 Science Learning Standards (NYSSLS) framework, a cluster is a group of data artifacts organized around a specific phenomenon. The cluster presents students with layered evidence and asks them to interpret it, apply concepts, and build explanations across all three NGSS dimensions. It is a sophisticated assessment tool, carefully scaffolded toward a specific Performance Expectation.

I kept asking myself: what would a cluster look like if it wasn't an assessment?

What if the data artifacts weren't selected to scaffold a student toward a predetermined conclusion, but to genuinely open a phenomenon to investigation? What if the student, rather than the assessment designer, chose the lens and the tools? What if the cluster were the inquiry itself, rather than a measure of whether inquiry had previously occurred?

That question didn't have an obvious answer. So I started following it.

What Genuine Inquiry Actually Requires

Genuine inquiry has specific requirements that most classroom investigations quietly violate.

The phenomenon has to resist easy explanation. Not artificially, but genuinely. A phenomenon a student can explain in thirty seconds without investigating isn't a phenomenon. It's a prompt.

The evidence has to be rich enough to support multiple valid pathways. If there is only one legitimate route through the investigation, students aren't choosing. They're following. I started designing Evidence Packages with up to twenty pieces of evidence, deliberately including material that conflicts, complicates, and occasionally dead-ends. Real scientific data is like that. A student who has never sat with contradictory evidence and had to make a judgment call has not done science. They have filled out a table.

The student has to own the investigative lens and the tools. The NGSS gives us a rich vocabulary for both: Crosscutting Concepts as lenses, Science and Engineering Practices as tools. But in most classrooms these are assigned, not chosen. Paul Andersen of The Wonder of Science put it simply: "Don't kill the wonder and don't hide the practices." Assigning the lens kills the wonder. Assigning the practice hides it. In genuine inquiry, the phenomenon and the evidence tell the student which lens to reach for. The teacher's job is to design conditions rich enough that those choices emerge naturally.

The student also needs a structure for moving through the investigation without being directed toward a conclusion. I settled on a cycle I call ETR-C: Explain your current thinking, Test it against the evidence, Revise it when the evidence pushes back, Communicate it, then start again. The revision step is the critical one. Not adding vocabulary to an unchanged idea, but genuinely rebuilding an explanation when the evidence demands it. That is where the learning lives.

The Assessment Problem

Genuine open inquiry and NGSS Performance Expectations (PEs) pull in different directions. Open inquiry asks students to choose their own Crosscutting Concepts and Science and Engineering Practices. Performance Expectations fix them. Open inquiry evaluates reasoning quality. PE assessment evaluates three-dimensional achievement against a specific standard. Open inquiry uses the cluster phenomenon as the context. PE assessment requires a brand-new scenario to test whether understanding genuinely transfers.

These are not compatible within a single assessment event. But they are compatible within a single instructional arc, if you keep them clearly separate.

The solution I arrived at is the Breakpoint. The open inquiry of the Syzygy Cluster runs its full course. Then the terrain narrows. The Breakpoint is where the investigation converges onto a specific PE, a specific scenario, and fixed dimensions. The student crosses it independently, without scaffolding, in a context they have never seen. The question it answers is not "did the student follow the investigation?" It is "did the student build understanding durable enough to travel?"

The Openness Problem

As the framework developed, I kept returning to the same question: how open is open enough?

Openness is not about removing all structure. It is about removing predetermined conclusions while keeping the conditions for genuine reasoning intact. Syzygy lessons are designed with soft borders. The boundaries between Disciplinary Core Ideas are permeable by design. A student investigating a life science phenomenon might reason their way into earth science or physics. A dead end is not a failure. It is a data point, and learning to recognize one is itself a scientific skill.

Every Syzygy Cluster is built with a PE Constellation, a teacher-facing map of all the Performance Expectations within the DCI that a student might legitimately reach. It is not a fence. It charts where genuine inquiry might naturally travel. When a student's work lands on a PE that wasn't part of the lesson plan, that is not a surprise to correct. It is evidence that the inquiry was real.

Where This Stands

I began with a forensics class and a need for a consistent design model. What I arrived at is a framework I did not entirely anticipate when I started, one that has been revised, tested, and revised again. Version 9 of the Syzygy Cluster Design Document will be released shortly, along with a first sample lesson. Both are still in draft form. That feels appropriate. A framework for genuine inquiry should probably not pretend to be finished.

I am also building an app that takes teacher prompts and existing canned labs and converts them into open inquiry models aligned with the Syzygy framework. It is not perfect yet, but it is already producing reliable first-draft lessons. If you have a lab you love that doesn't quite meet the inquiry threshold, this is being built with you in mind.

Don't kill the wonder. Don't hide the practices. That is still the inspiration. This framework is my attempt to give teachers the tools to meet it.